NLP

UNIT-2

Grammars and Parsing – Top-Down and Bottom-Up Parsers

1. Grammars in Natural Language Processing

Definition

A grammar is a set of rules that describe how words combine to form valid sentences in a language.

In Natural Language Processing (NLP), grammars are used to:

Check sentence structure

Analyze syntax

Build parse trees

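A commonly used grammar formalism is the Context-Free Grammar (CFG), in which every rule rewrites a single non-terminal symbol (such as S or NP) into a sequence of symbols or words.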

Example Grammar Rules

S → NP + VP

NP → Det + N

VP → V + NP

Det → the | an

N → boy | apple

V → eats

Example sentence:

The boy eats an apple

Parse using grammar rules:

S

→ NP + VP

NP

→ The boy

VP

→ eats an apple

These rules help the system understand sentence structure.
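The grammar above can be sketched as plain data. The following is a minimal illustration, not a standard library API: the names `GRAMMAR` and `expand` are hypothetical, and lexical rules for the example words are included so that the sentence can actually be derived.

```python
from itertools import product

# Phrase rules from the notes plus lexical rules for the example words.
GRAMMAR = {
    "S": [["NP", "VP"]],
    "NP": [["Det", "N"]],
    "VP": [["V", "NP"]],
    "Det": [["the"], ["an"]],
    "N": [["boy"], ["apple"]],
    "V": [["eats"]],
}

def expand(symbol):
    """Return every word sequence the symbol derives (works only for non-recursive grammars)."""
    if symbol not in GRAMMAR:  # a terminal, i.e. an actual word
        return [[symbol]]
    results = []
    for rhs in GRAMMAR[symbol]:
        # Expand each right-hand-side symbol, then combine the alternatives in order.
        for parts in product(*(expand(s) for s in rhs)):
            results.append([word for part in parts for word in part])
    return results

sentences = [" ".join(words) for words in expand("S")]
print("the boy eats an apple" in sentences)  # True
```

This toy grammar also derives strings such as "an boy eats the apple", which is one reason plain CFG rules are later extended with features.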

2. Parsing in NLP

Definition

Parsing is the process of analyzing a sentence according to grammar rules to determine its syntactic structure.

The result of parsing is a parse tree.

Example:

Sentence:

The boy reads a book

Parsing identifies:

Subject → boy

Verb → reads

Object → book

3. Types of Parsers

There are two main parsing approaches:

Top-Down Parsing

Bottom-Up Parsing

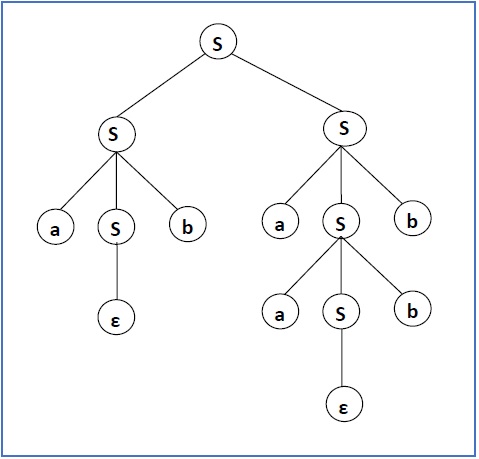

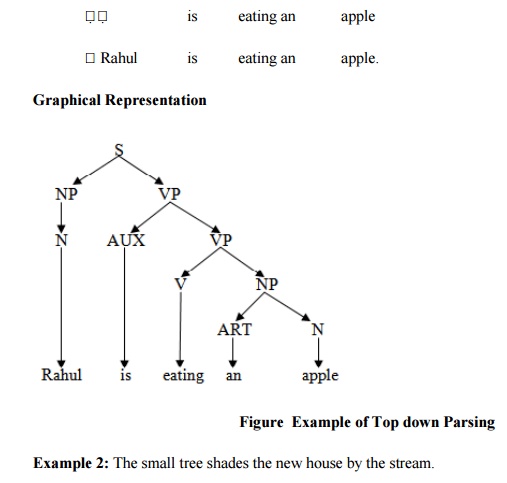

4. Top-Down Parser

Definition

A Top-Down Parser starts from the start symbol (S) and tries to generate the input sentence using grammar rules.

It expands the parse tree from root to leaves.

Process

Start with:

S

Apply grammar rules step by step until the sentence is formed.

Example:

Sentence:

The boy eats an apple

Steps:

S

→ NP + VP

NP

→ Det + N

→ The boy

VP

→ V + NP

→ eats an apple
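The steps above can be sketched as a small recursive-descent parser. This is an illustrative sketch under assumptions: the `LEXICON` table and the function names `parse`, `expand_rhs` and `top_down_parse` are hypothetical, and the grammar must not be left-recursive.

```python
GRAMMAR = {
    "S": [["NP", "VP"]],
    "NP": [["Det", "N"]],
    "VP": [["V", "NP"]],
}
# Assumed word categories for the example sentence.
LEXICON = {"the": "Det", "an": "Det", "boy": "N", "apple": "N", "eats": "V"}

def parse(symbol, words, pos):
    """Try to derive `symbol` starting at words[pos]; yield (tree, next_pos) pairs."""
    if symbol not in GRAMMAR:  # word category: match exactly one word
        if pos < len(words) and LEXICON.get(words[pos]) == symbol:
            yield (symbol, words[pos]), pos + 1
        return
    for rhs in GRAMMAR[symbol]:  # expand the symbol with each of its rules
        yield from expand_rhs(symbol, rhs, words, pos, [])

def expand_rhs(symbol, rhs, words, pos, children):
    """Match the remaining right-hand-side symbols from left to right."""
    if not rhs:
        yield (symbol, *children), pos
        return
    for child, next_pos in parse(rhs[0], words, pos):
        yield from expand_rhs(symbol, rhs[1:], words, next_pos, children + [child])

def top_down_parse(sentence):
    words = sentence.lower().split()
    for tree, end in parse("S", words, 0):
        if end == len(words):  # accept only if every word was consumed
            return tree
    return None

print(top_down_parse("The boy eats an apple"))
```

The parser starts from S and only looks at the words when it reaches a word category, which is exactly the root-to-leaves order described above.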

Advantages

Easy to understand

Simple implementation

Disadvantages

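Naive implementations loop forever on left-recursive rules (for example, NP → NP + PP)

May expand rules that cannot match the input, so unnecessary trees are built and then discarded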

5. Bottom-Up Parser

Definition

A Bottom-Up Parser starts with the input words and gradually builds the parse tree towards the start symbol (S).

It constructs the tree from leaves to root.

Process

Sentence:

The boy eats an apple

Steps:

Identify words

Combine them into phrases

Build larger structures

Example:

The + boy → NP

an + apple → NP

eats + NP → VP

NP + VP → S

6. Shift-Reduce Parsing (Bottom-Up Method)

One common bottom-up method is Shift-Reduce Parsing.

Steps:

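Shift – move the next input word onto a stack

Reduce – when the symbols on top of the stack match the right-hand side of a grammar rule, replace them with the rule's left-hand side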

Example:

Sentence:

The boy runs

Shift → The

Shift → boy

Reduce → NP

Shift → runs

Reduce → VP (using an intransitive rule VP → V)

Reduce → S (NP + VP → S)
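The shift and reduce steps can be sketched as follows. This is a simplified, greedy sketch: it reduces whenever any rule matches the top of the stack and never backtracks, whereas practical shift-reduce parsers need a strategy for choosing between shifting and reducing. The rule `VP → V` and the `LEXICON` table are assumptions added so that "runs" can form a verb phrase.

```python
RULES = [
    ("NP", ["Det", "N"]),
    ("VP", ["V"]),        # assumed intransitive rule
    ("S", ["NP", "VP"]),
]
LEXICON = {"the": "Det", "boy": "N", "runs": "V"}  # assumed word categories

def shift_reduce(sentence):
    """Return (accepted, trace) for a sentence whose words are all in LEXICON."""
    stack, trace = [], []
    for word in sentence.lower().split():
        stack.append(LEXICON[word])  # shift: push the word's category
        trace.append(f"shift {word}")
        reduced = True
        while reduced:  # reduce as long as some rule matches the top of the stack
            reduced = False
            for lhs, rhs in RULES:
                if stack[-len(rhs):] == rhs:
                    stack[-len(rhs):] = [lhs]
                    trace.append(f"reduce {lhs}")
                    reduced = True
                    break
    return stack == ["S"], trace

ok, trace = shift_reduce("The boy runs")
print(ok)     # True
print(trace)  # ['shift the', 'shift boy', 'reduce NP', 'shift runs', 'reduce VP', 'reduce S']
```

The sentence is accepted when the whole input has been shifted and the stack contains only the start symbol S.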

7. Differences Between Top-Down and Bottom-Up Parsing

| Feature | Top-Down Parser | Bottom-Up Parser |

|---|---|---|

| Parsing Direction | Root → Leaves | Leaves → Root |

| Start Point | Start symbol (S) | Input sentence |

| Tree Construction | From top | From bottom |

| Efficiency | May expand rules that never match the input | May build phrases that never fit into a complete sentence |

| Example Method | Recursive descent | Shift-reduce |

8. Importance of Parsing in NLP

Parsing is important for:

Understanding sentence structure

Machine translation

Grammar checking

Question answering systems

Chatbots

Short Exam Answer (10 Marks)

Grammars define the rules that describe how words combine to form valid sentences in a language. In NLP, grammars such as Context Free Grammar are used to analyze sentence structure. Parsing is the process of analyzing a sentence using grammar rules to produce a parse tree. Two main parsing approaches are Top-Down and Bottom-Up parsing. Top-Down parsing starts from the start symbol and generates the sentence using grammar rules, while Bottom-Up parsing starts from input words and builds the parse tree towards the start symbol. Both methods help NLP systems understand syntactic structure.

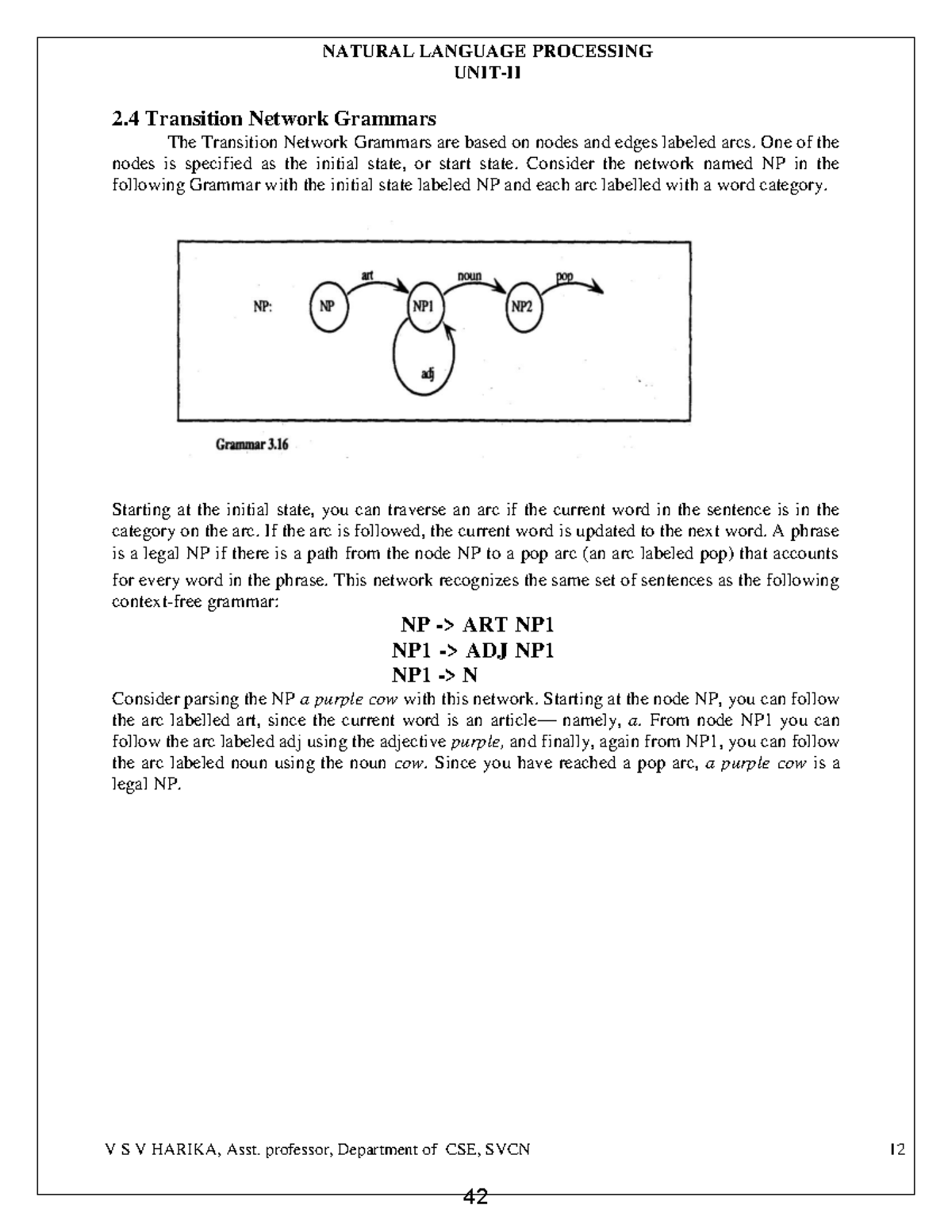

Transition Network Grammars (TNG)

1. Introduction

Transition Network Grammar (TNG) is a grammar representation used in Natural Language Processing (NLP) for parsing sentences.

It represents grammar using a network of states and transitions.

In this method:

Nodes (states) represent positions in parsing.

Arcs (transitions) represent grammatical rules.

The parser moves from one state to another while reading words in a sentence.

2. Basic Idea of Transition Network Grammar

A Transition Network is similar to a finite state machine.

It consists of:

States (nodes)

Transitions (arcs)

Start state

Final state

The parser reads words and moves through the network until it reaches the final state.

If it reaches a final state after reading every word of the sentence, the sentence is accepted as grammatically correct.

3. Example of Transition Network

Example sentence:

The boy reads a book

Grammar structure:

Sentence → Noun Phrase + Verb Phrase

Step-by-step transition

Start State

↓

Noun Phrase (NP)

↓

Verb Phrase (VP)

↓

End State

Example transitions:

Start → NP

NP → VP

VP → End

4. Components of Transition Network Grammar

1. Nodes (States)

States represent positions in sentence parsing.

Example:

Start state

NP state

VP state

Final state

2. Arcs (Transitions)

Arcs represent grammar rules.

Example:

NP → Det + N

Example words:

Det → The

N → boy

3. Labels

Transitions are labeled with grammar symbols or words.

Example:

Det → the

N → boy

5. Types of Transition Networks

1. Simple Transition Networks

These represent grammar rules using basic state transitions.

Example:

Sentence → NP + VP

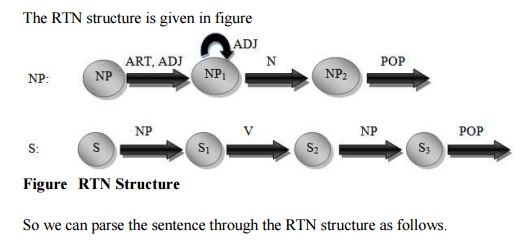

2. Recursive Transition Networks (RTN)

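In these networks an arc can be labelled with the name of another network (or of the same network). The parser enters that network, and when it finishes, returns to where it left off. This is what allows recursive structures.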

Example:

Sentence → NP + VP

VP → V + NP

Because networks can call one another, recursive networks can handle nested phrases and are as expressive as context-free grammars.
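A recursive transition network for these two rules can be sketched as a set of small networks that call each other. This is a minimal illustration under assumptions: the state numbers, the `NP → Det + N` network, the `LEXICON` table and the function names are hypothetical, and the sketch would loop forever on a left-recursive network.

```python
# Each network maps a state to its outgoing arcs: (label, next_state).
# A label is either a word category or the name of another network.
NETWORKS = {
    "S":  {0: [("NP", 1)], 1: [("VP", 2)], 2: []},
    "NP": {0: [("Det", 1)], 1: [("N", 2)], 2: []},
    "VP": {0: [("V", 1)], 1: [("NP", 2)], 2: []},
}
FINAL = {"S": {2}, "NP": {2}, "VP": {2}}
LEXICON = {"the": "Det", "a": "Det", "boy": "N", "book": "N", "reads": "V"}

def traverse(net, state, words, pos):
    """Yield every input position at which `net` can finish, starting from `state`."""
    if state in FINAL[net]:
        yield pos
    for label, nxt in NETWORKS[net][state]:
        if label in NETWORKS:  # arc names a network: run it, then continue here
            for mid in traverse(label, 0, words, pos):
                yield from traverse(net, nxt, words, mid)
        elif pos < len(words) and LEXICON.get(words[pos]) == label:
            yield from traverse(net, nxt, words, pos + 1)

def accepts(sentence):
    words = sentence.lower().split()
    return len(words) in traverse("S", 0, words, 0)

print(accepts("The boy reads a book"))  # True
print(accepts("The boy reads"))         # False
```

The NP network is reused by both the S network and the VP network, which is the main practical benefit over writing one flat network.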

6. Example Parsing

Sentence:

The boy eats apples

Transition steps:

Start

↓

Det → The

↓

N → boy

↓

V → eats

↓

N → apples

↓

End

The parser moves through the network and reaches the final state.
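The transition steps above can be sketched as a simple state machine. The state names, the `TRANSITIONS` table and the `LEXICON` entries are assumptions chosen for this one sentence pattern (Det, N, V, N).

```python
# (current state, word category) -> next state
TRANSITIONS = {
    ("start", "Det"): "after_det",
    ("after_det", "N"): "after_subject",
    ("after_subject", "V"): "after_verb",
    ("after_verb", "N"): "end",
}
FINAL_STATES = {"end"}
LEXICON = {"the": "Det", "boy": "N", "eats": "V", "apples": "N"}

def run_network(sentence):
    """Follow one arc per word; return (accepted, states visited)."""
    state = "start"
    path = [state]
    for word in sentence.lower().split():
        category = LEXICON.get(word)
        state = TRANSITIONS.get((state, category))
        if state is None:  # no arc for this word: reject
            return False, path
        path.append(state)
    return state in FINAL_STATES, path

print(run_network("The boy eats apples"))
# (True, ['start', 'after_det', 'after_subject', 'after_verb', 'end'])
```

A sentence that stops early, such as "The boy eats", is rejected because the parser is not in a final state when the input runs out.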

7. Advantages of Transition Network Grammar

Easy representation of grammar rules

Efficient sentence parsing

Useful for syntactic analysis

Can represent recursive structures

8. Limitations

Difficult to represent very complex grammar rules

May require large networks for large languages

9. Applications in NLP

Transition Network Grammars are used in:

Sentence parsing

Syntax analysis

Natural language understanding systems

Early NLP systems

Short Exam Answer (5–10 Marks)

Transition Network Grammar is a method used in Natural Language Processing to represent grammar using a network of states and transitions. It consists of nodes representing states and arcs representing grammatical rules. A parser moves through the network while reading words in a sentence until it reaches the final state. If the final state is reached successfully, the sentence is considered grammatically correct. Transition Network Grammars are useful for syntactic analysis and sentence parsing.

Feature Systems and Augmented Grammars

1. Introduction

In Natural Language Processing (NLP), plain Context-Free Grammar (CFG) rules cannot conveniently express constraints such as agreement between a subject and its verb.

To handle additional information such as:

Number (singular/plural)

Gender

Person

Tense

we use Feature Systems and Augmented Grammars.

These methods add extra information called features to grammar rules so that the parser can understand language more accurately.

2. Feature Systems

Definition

A Feature System is a method of representing linguistic information using features and their values.

Features describe grammatical properties of words.

Example features:

| Feature | Possible Values |

|---|---|

| Number | singular, plural |

| Gender | masculine, feminine |

| Person | first, second, third |

| Tense | past, present, future |

Example

Sentence:

The boy runs

Features:

boy

Noun

Number = singular

runs

Verb

Number = singular

Tense = present

The feature system checks agreement between words.

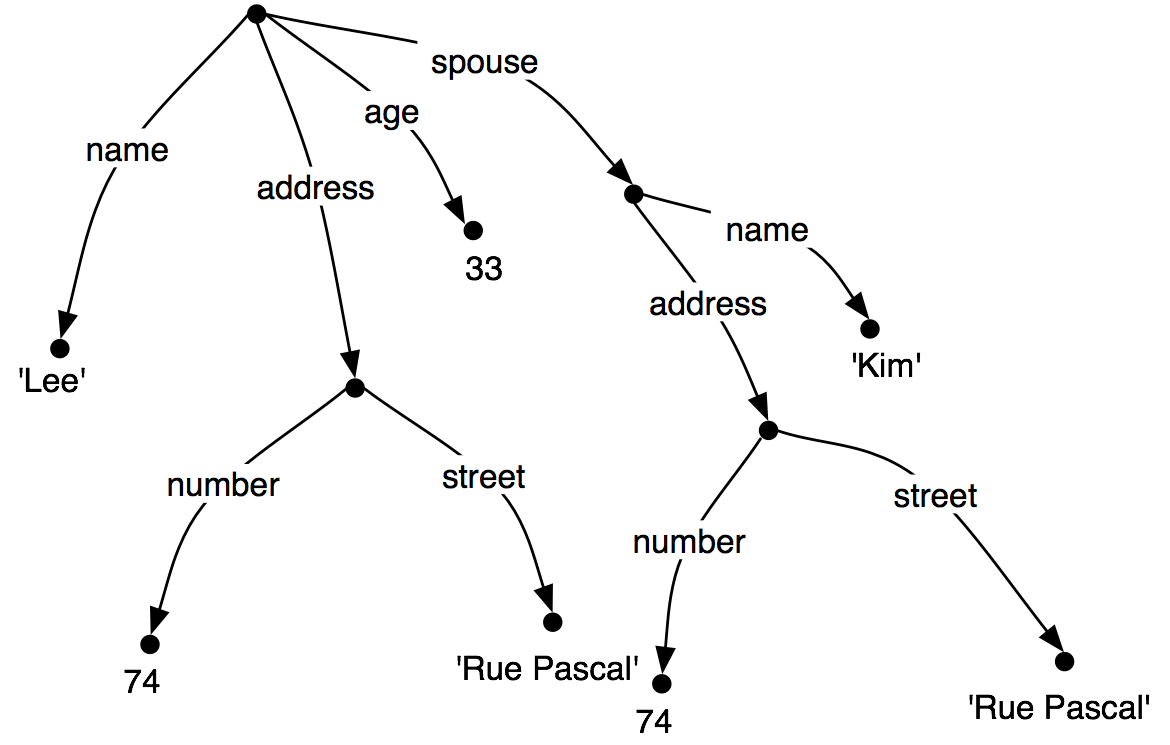

3. Feature Structures

Features are often represented using feature structures.

Example representation:

Word: boy

Category = noun

Number = singular

Gender = masculine

Word: boys

Category = noun

Number = plural

This structure helps the system understand grammatical relationships.

4. Augmented Grammars

Definition

Augmented Grammars are grammars that include additional feature information along with grammar rules.

They extend simple grammar rules to include feature constraints.

Example CFG rule:

S → NP + VP

Augmented grammar rule:

S → NP (number = X) + VP (number = X)

This ensures subject–verb agreement.

5. Example of Augmented Grammar

Sentence:

✔ The boy runs

NP → singular

VP → singular

Sentence is correct.

Sentence:

✘ The boy run

NP → singular

VP → plural

Grammar rule fails because number agreement is incorrect.

Augmented grammars help detect such errors.
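The agreement check behind the rule S → NP (number = X) + VP (number = X) can be sketched as below. The `LEXICON` table and function names are hypothetical. Marking "run" as plural follows the simplification used in these notes; in real English the form is also used with first and second person singular subjects.

```python
LEXICON = {
    "the":  {"cat": "Det"},
    "boy":  {"cat": "N", "number": "singular"},
    "boys": {"cat": "N", "number": "plural"},
    "runs": {"cat": "V", "number": "singular"},
    "run":  {"cat": "V", "number": "plural"},
}

def number_of(words, category):
    """Return the number feature of the first word with the given category."""
    for word in words:
        entry = LEXICON[word]
        if entry["cat"] == category:
            return entry["number"]
    return None

def agrees(sentence):
    """Both phrases must carry the same value X for the number feature."""
    words = sentence.lower().split()
    np_number = number_of(words, "N")
    vp_number = number_of(words, "V")
    return np_number is not None and np_number == vp_number

print(agrees("The boy runs"))  # True
print(agrees("The boy run"))   # False
```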

6. Attribute Value Matrix (AVM)

Features are often represented using Attribute Value Matrices.

Example:

Word: runs

Category: Verb

Tense: Present

Number: Singular

Person: Third

This structured representation helps in parsing and grammar checking.

7. Advantages of Feature Systems and Augmented Grammars

Represent detailed linguistic information

Ensure agreement between words

Improve syntactic analysis

Help in accurate sentence parsing

8. Applications in NLP

Used in:

Grammar checking systems

Natural language parsers

Machine translation

Speech processing systems

Short Exam Answer (5–10 Marks)

Feature systems represent linguistic information using features such as number, gender, person, and tense. These features help describe grammatical properties of words. Augmented grammars extend traditional grammar rules by adding feature information to ensure grammatical constraints such as subject–verb agreement. For example, a rule may require that the noun phrase and verb phrase have the same number feature. Feature systems and augmented grammars improve syntactic analysis and allow NLP systems to handle complex language structures more accurately.

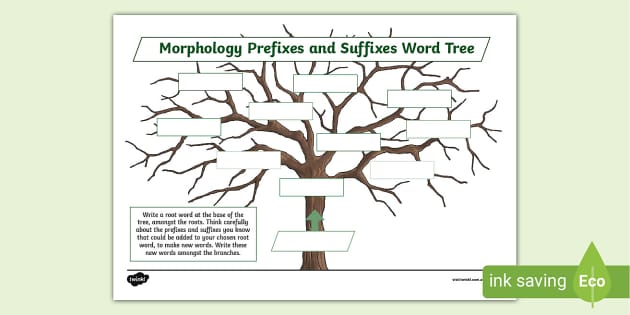

Morphological Analysis and the Lexicon

1. Introduction

In Natural Language Processing (NLP), understanding words is an important step in language processing. Two important concepts used for this purpose are:

Morphological Analysis

Lexicon

Morphological analysis studies the internal structure of words, while the lexicon is a dictionary that stores information about words.

2. Morphological Analysis

Definition

Morphological analysis is the process of analyzing a word into its basic components called morphemes.

A morpheme is the smallest unit of meaning in a language.

Types of Morphemes

1. Root Morpheme

The main part of the word that carries the basic meaning.

Example:

play, happy, teach

2. Prefix

A morpheme added at the beginning of a word.

Examples:

un + happy → unhappy

re + write → rewrite

3. Suffix

A morpheme added at the end of a word.

Examples:

play + er → player

happy + ness → happiness (the final y changes to i)

Example of Morphological Analysis

Word:

Unhappiness

Breakdown:

un + happy + ness

Meaning:

un → not

happy → root word

ness → state or condition

Importance in NLP

Morphological analysis helps:

Identify root words

Understand word variations

Process different word forms

Example:

running → run

played → play
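A very small affix-stripping analyzer illustrates the idea. This is a toy sketch, not a real morphological analyzer: the `PREFIXES`, `SUFFIXES` and `ROOTS` tables and the two spelling repairs in `restore` are assumptions that cover only the examples in these notes.

```python
PREFIXES = ["un", "re"]
SUFFIXES = ["ness", "er", "ing", "ed", "s"]
ROOTS = {"happy", "play", "write", "run", "teach"}

def restore(stem):
    """Yield the stem plus variants that undo common spelling changes."""
    yield stem
    if stem.endswith("i"):  # happi -> happy
        yield stem[:-1] + "y"
    if len(stem) > 2 and stem[-1] == stem[-2]:  # runn -> run
        yield stem[:-1]

def analyze(word):
    """Return the list of morphemes of `word`, or None if no analysis is found."""
    word = word.lower()
    if word in ROOTS:
        return [word]
    for prefix in PREFIXES:
        if word.startswith(prefix):
            rest = analyze(word[len(prefix):])
            if rest:
                return [prefix] + rest
    for suffix in SUFFIXES:
        if word.endswith(suffix):
            for candidate in restore(word[:-len(suffix)]):
                rest = analyze(candidate)
                if rest:
                    return rest + [suffix]
    return None

print(analyze("Unhappiness"))  # ['un', 'happy', 'ness']
print(analyze("running"))      # ['run', 'ing']
print(analyze("played"))       # ['play', 'ed']
```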

3. Types of Morphological Processes

1. Inflection

Inflection modifies a word to express grammatical information such as tense, number, or gender without changing its core meaning or its part of speech.

Examples:

run → runs

book → books

play → played

2. Derivation

Derivation creates new words with new meanings, often changing the part of speech (for example, a verb becomes a noun).

Examples:

teach → teacher

happy → happiness

4. The Lexicon

Definition

A lexicon is a dictionary of words used in NLP systems.

It stores detailed information about each word.

This information may include:

Word meaning

Part of speech

Morphological structure

Pronunciation

Example Lexicon Entry

Word: runs

Information stored:

Root → run

Part of speech → verb

Tense → present

Number → singular

5. Role of Lexicon in NLP

The lexicon helps NLP systems:

Identify words in sentences

Determine part of speech

Understand word meanings

Perform morphological analysis

Example:

Sentence:

The boy runs fast

Lexicon helps identify:

boy → noun

runs → verb

fast → adverb

6. Relationship Between Morphology and Lexicon

Morphological analysis works together with the lexicon.

Process:

Input word is read

Lexicon checks whether the word exists

Morphological analyzer breaks the word into morphemes

Root word and grammatical information are identified
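The four steps can be sketched as a lookup that falls back to suffix analysis. The `LEXICON` and `INFLECTIONS` tables and the `lookup` function are hypothetical and handle only regular forms made by adding a suffix to a stored root.

```python
LEXICON = {
    "run":  {"pos": "verb"},
    "play": {"pos": "verb"},
    "boy":  {"pos": "noun"},
}
# Suffixes allowed for each part of speech and the information they add.
INFLECTIONS = {
    "verb": [("ed", {"tense": "past"}),
             ("s", {"tense": "present", "number": "singular"})],
    "noun": [("s", {"number": "plural"})],
}

def lookup(word):
    """Return root and grammatical information for a word, or None if unknown."""
    word = word.lower()                  # step 1: read the input word
    if word in LEXICON:                  # step 2: the lexicon has the word as-is
        return {"root": word, **LEXICON[word]}
    for root, entry in LEXICON.items():  # step 3: try root + suffix
        for suffix, info in INFLECTIONS[entry["pos"]]:
            if word == root + suffix:
                return {"root": root, **entry, **info}  # step 4: report the result
    return None

print(lookup("runs"))
# {'root': 'run', 'pos': 'verb', 'tense': 'present', 'number': 'singular'}
```

Storing only roots and deriving inflected forms by rule keeps the lexicon small, which is the usual motivation for combining the two components.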

7. Applications in NLP

Morphological analysis and lexicons are used in:

Spell checking

Machine translation

Information retrieval

Speech recognition

Text processing

Short Exam Answer (5–10 Marks)

Morphological analysis is the process of analyzing words into their smallest meaningful units called morphemes. It helps identify prefixes, suffixes, and root words. Morphological processes include inflection and derivation. The lexicon is a dictionary used in NLP systems that stores information about words such as their meanings, part of speech, and grammatical properties. Morphological analysis and the lexicon together help NLP systems understand and process words effectively.

Parsing with Features

1. Introduction

Parsing with Features is a method used in Natural Language Processing (NLP) to analyze sentences using grammar rules that include linguistic features.

Simple grammar rules sometimes cannot capture all grammatical constraints. Therefore, features such as number, gender, person, and tense are added to grammar rules to improve parsing accuracy.

These features help the parser ensure agreement between words in a sentence.

2. What are Features?

Features are properties that describe grammatical information about words.

Common features include:

| Feature | Possible Values |

|---|---|

| Number | singular, plural |

| Gender | masculine, feminine |

| Person | first, second, third |

| Tense | past, present, future |

| Case | nominative (subject), accusative (object) |

These features help NLP systems interpret language correctly.

3. Feature-Based Grammar

In feature-based grammar, grammar rules are extended by attaching feature information.

Example simple rule:

S → NP + VP

Feature-based rule:

S → NP (number = X) + VP (number = X)

This rule ensures subject–verb agreement.

4. Example of Parsing with Features

Sentence:

✔ The boy runs

Features:

NP → boy

Number = singular

VP → runs

Number = singular

The parser checks whether the features match.

Since both are singular, the sentence is grammatically correct.

Sentence:

✘ The boy run

NP → singular

VP → plural

Features do not match, so the parser rejects the sentence.

5. Feature Structures

Features are usually represented using feature structures.

Example:

Word: runs

Category → Verb

Number → Singular

Tense → Present

Person → Third

This structure helps the parser analyze grammatical relationships.

6. Feature Unification

Parsing with features often uses a process called unification.

Definition

Unification is the process of combining feature structures and checking whether their values are compatible.

Example:

NP (number = singular)

VP (number = singular)

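Unification succeeds because the values match. If the VP were marked number = plural instead, unification would fail and the parser would reject the sentence.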
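Unification of flat feature structures can be sketched with dictionaries. This is a simplified sketch: the `unify` function is hypothetical, and full unification also handles nested structures and shared variables, which are omitted here.

```python
def unify(fs1, fs2):
    """Merge two flat feature structures; return None if a shared feature conflicts."""
    result = dict(fs1)
    for feature, value in fs2.items():
        if feature in result and result[feature] != value:
            return None  # incompatible values: unification fails
        result[feature] = value
    return result

np_features = {"number": "singular"}
vp_features = {"number": "singular", "person": "third"}

print(unify(np_features, vp_features))           # {'number': 'singular', 'person': 'third'}
print(unify(np_features, {"number": "plural"}))  # None
```

When unification succeeds, the result carries the combined information from both structures, not just a yes or no answer.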

7. Advantages of Parsing with Features

Handles complex grammar constraints

Ensures agreement between sentence elements

Improves parsing accuracy

Allows more detailed language representation

8. Applications in NLP

Parsing with features is used in:

Grammar checking systems

Natural language parsers

Machine translation

Question answering systems

Short Exam Answer (5–10 Marks)

Parsing with features is a method used in Natural Language Processing where grammar rules are extended with linguistic features such as number, gender, person, and tense. These features help ensure grammatical constraints like subject–verb agreement during parsing. Feature structures represent grammatical properties of words, and unification is used to combine and verify feature compatibility. Parsing with features improves syntactic analysis and enables NLP systems to handle complex language structures effectively.

Augmented Transition Networks (ATN)

1. Introduction

Augmented Transition Networks (ATN) are an advanced form of Transition Network Grammars used in Natural Language Processing (NLP).

ATN extends Recursive Transition Networks (RTN) by adding:

Registers (memory storage)

Tests (conditions)

Actions (operations)

These additional mechanisms allow ATNs to handle complex language structures more effectively.

ATNs were widely used for syntactic parsing in early natural language understanding systems.

2. Basic Idea of ATN

An Augmented Transition Network represents grammar as a network of states connected by transitions.

While parsing a sentence:

The parser starts at a start state

Moves through the network by reading words

Executes actions and tests

Reaches a final state if the sentence is valid

3. Components of ATN

1. States

States represent positions in the parsing process.

Examples:

Start state

Intermediate state

Final state

2. Arcs (Transitions)

Arcs connect states and represent grammar rules or conditions.

Example:

NP → Det + N

The parser moves from one state to another when the condition is satisfied.

3. Registers

Registers are memory locations used to store information during parsing.

They store:

Subject information

Verb information

Sentence structure

Example:

Register may store:

Subject = boy

Verb = runs

4. Tests

Tests are conditions that must be satisfied before a transition is taken.

Example:

Check whether:

Number(NP) = Number(VP)

This ensures subject–verb agreement.

5. Actions

Actions perform operations such as:

Storing information in registers

Building parse trees

Recording grammatical relationships

4. Example of ATN Parsing

Sentence:

The boy reads a book

Parsing steps:

Start State

↓

Det → The

↓

N → boy

↓

V → reads

↓

Det → a

↓

N → book

↓

Final State

During parsing, the system stores information such as:

Subject → boy

Verb → reads

Object → book
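The example can be sketched as a flat network whose arcs carry a test and a register action. This is a simplified illustration under assumptions: the state names, the `ARCS` and `LEXICON` tables and the `parse` function are hypothetical, and a full ATN would also call sub-networks such as NP instead of listing every word category in one network.

```python
LEXICON = {
    "the":   {"cat": "Det"},
    "a":     {"cat": "Det"},
    "boy":   {"cat": "N", "number": "singular"},
    "boys":  {"cat": "N", "number": "plural"},
    "book":  {"cat": "N", "number": "singular"},
    "reads": {"cat": "V", "number": "singular"},
    "read":  {"cat": "V", "number": "plural"},
}
# state -> (word category, run agreement test?, register to fill, next state)
ARCS = {
    "S0": ("Det", False, None, "S1"),
    "S1": ("N", False, "subject", "S2"),
    "S2": ("V", True, "verb", "S3"),
    "S3": ("Det", False, None, "S4"),
    "S4": ("N", False, "object", "S5"),
}
FINAL = "S5"

def parse(sentence):
    """Return the filled registers if the sentence is accepted, else None."""
    registers = {}
    state = "S0"
    for word in sentence.lower().split():
        entry = LEXICON.get(word)
        arc = ARCS.get(state)
        if entry is None or arc is None:
            return None
        category, needs_agreement, register, next_state = arc
        if entry["cat"] != category:
            return None
        # Test: the verb's number must equal the number stored for the subject.
        if needs_agreement and entry["number"] != registers["subject_number"]:
            return None
        # Action: store the word in the arc's register.
        if register:
            registers[register] = word
        if register == "subject":
            registers["subject_number"] = entry["number"]
        state = next_state
    return registers if state == FINAL else None

print(parse("The boy reads a book"))
# {'subject': 'boy', 'subject_number': 'singular', 'verb': 'reads', 'object': 'book'}
```

The registers are what distinguish this from a plain transition network: the test on the verb arc can only work because the subject's number was stored earlier.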

5. Advantages of ATN

Can handle complex sentence structures

Allows recursion in grammar rules

Uses memory (registers) to store information

Supports syntactic and semantic analysis

6. Limitations

ATN models can become very complex

Difficult to design for large grammars

Requires more computation

7. Applications in NLP

Augmented Transition Networks are used in:

Natural language parsing

Natural language understanding systems

Early AI language systems

Grammar checking

Short Exam Answer (5–10 Marks)

Augmented Transition Networks (ATN) are an extension of Recursive Transition Networks used in Natural Language Processing for syntactic parsing. ATNs represent grammar as a network of states connected by transitions. They include additional mechanisms such as registers, tests, and actions that allow the parser to store information and check conditions during parsing. These features enable ATNs to handle complex language structures effectively. ATNs are used in natural language understanding systems and sentence parsing.

Unit-2 topics covered in these notes:

Grammars and Parsing

Top-Down and Bottom-Up Parsers

Transition Network Grammars

Feature Systems and Augmented Grammars

Morphological Analysis and Lexicon

Parsing with Features

Augmented Transition Networks
